From Tokens to Work.

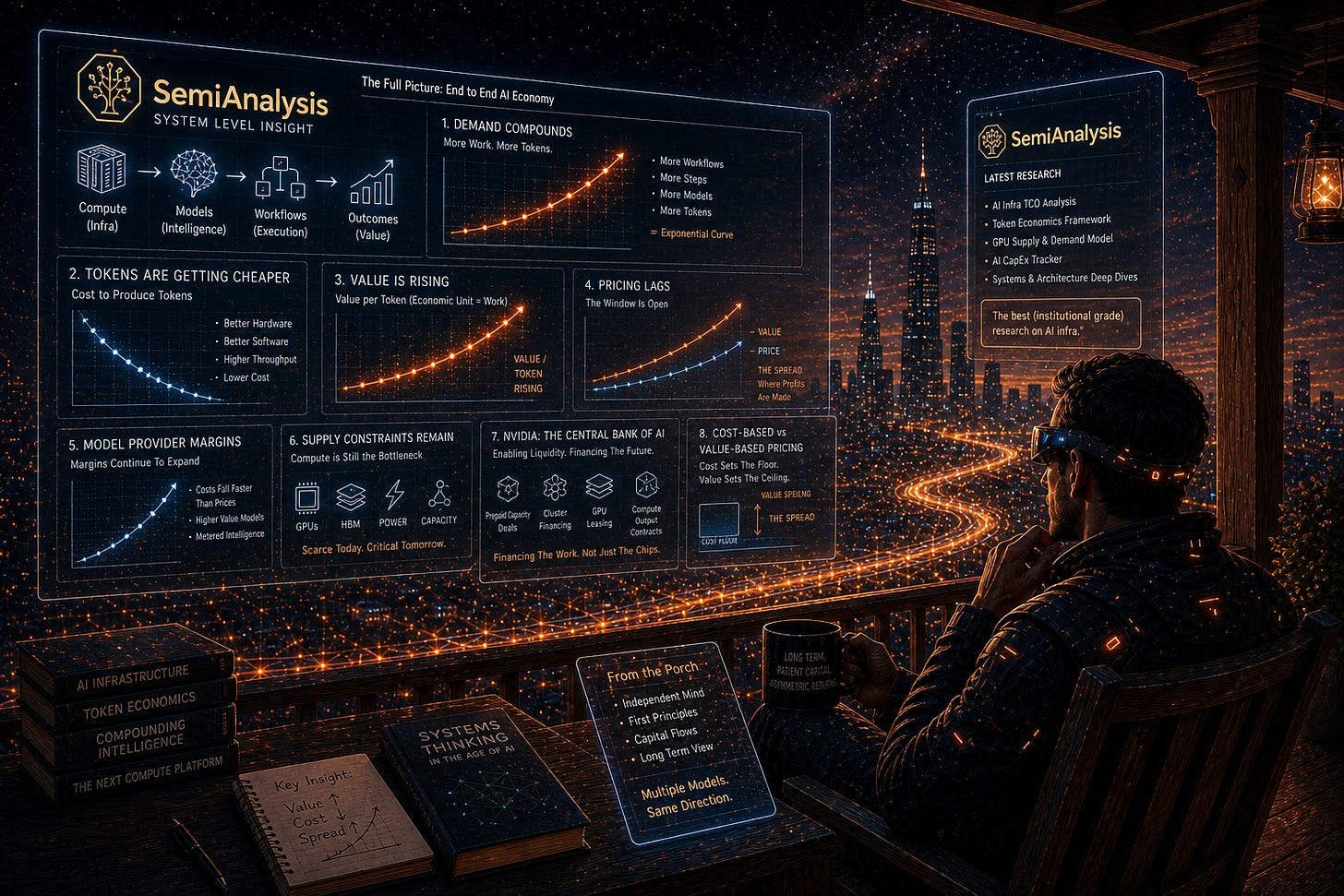

Reading SemiAnalysis so you don't have to (but you should). Tokens = work, value > cost, spread's the story.

If you’re not reading Dylan Patel and the team at SemiAnalysis, go here now and sign up. (And then come back, please.)

IMO (and I don’t think I’m alone), they produce the best (institutional-grade) research on AI infra out there. For the purposes of staying out of a competition I won’t win, I’m considering myself “pro-grade,” which is a notch below “institutional-grade” (because I made it up). Anyway, SemiAnalysis put out a piece last week that I read and loved, and not without a bit of envy, because it was one of the clearest end-to-end explanations of what’s happening in AI right now.

I encourage you to read it; but, just in case, TLDR:

Demand compounds – agentic AI turned tokens into units of real economic work.

Costs fall – hardware / software advances are crushing the cost to produce tokens.

Value rises – tokens now drive real ROI, so the work they produce is worth more.

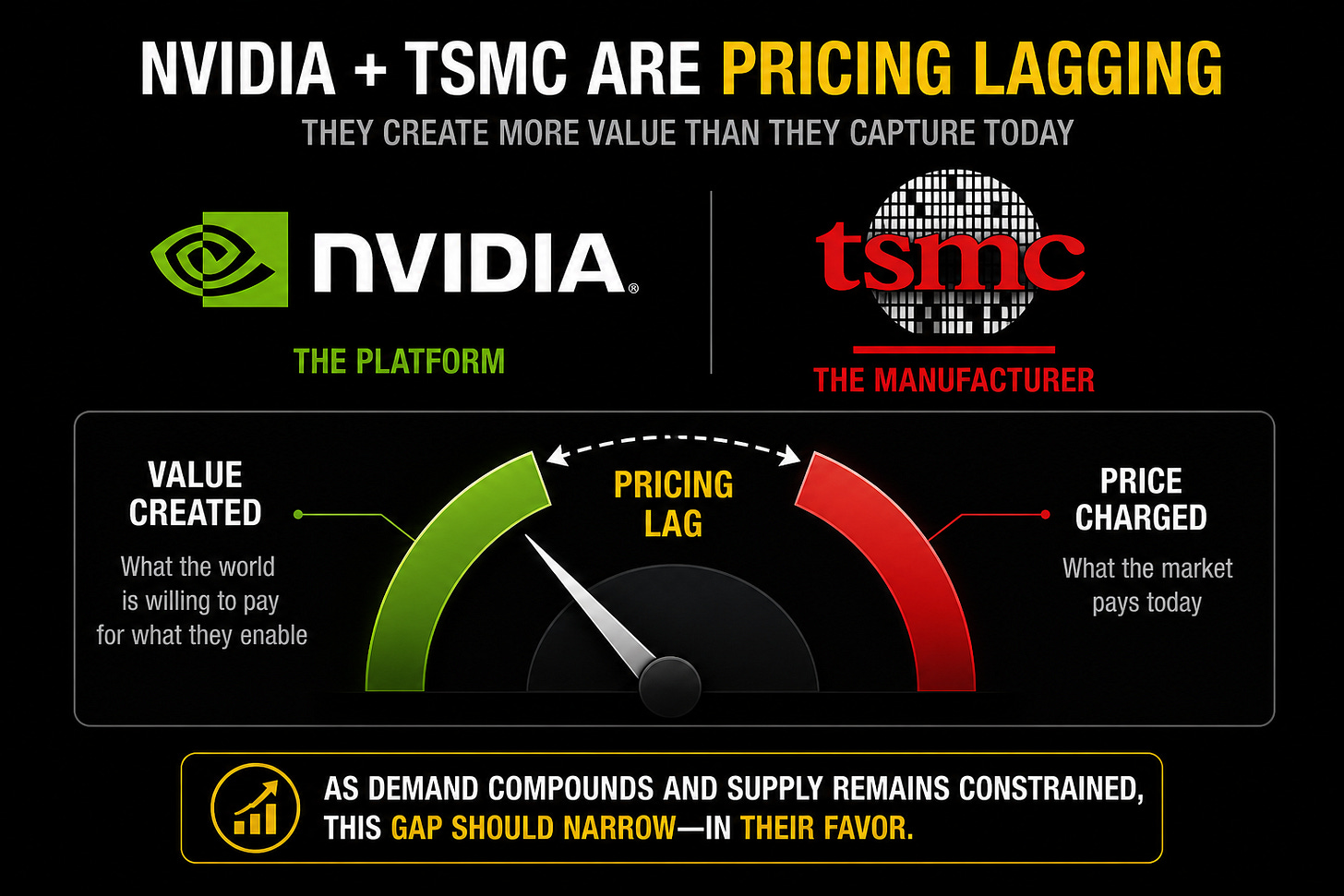

Pricing lags – NVDA / TSMC (ironically) haven’t fully caught up to the new economics.

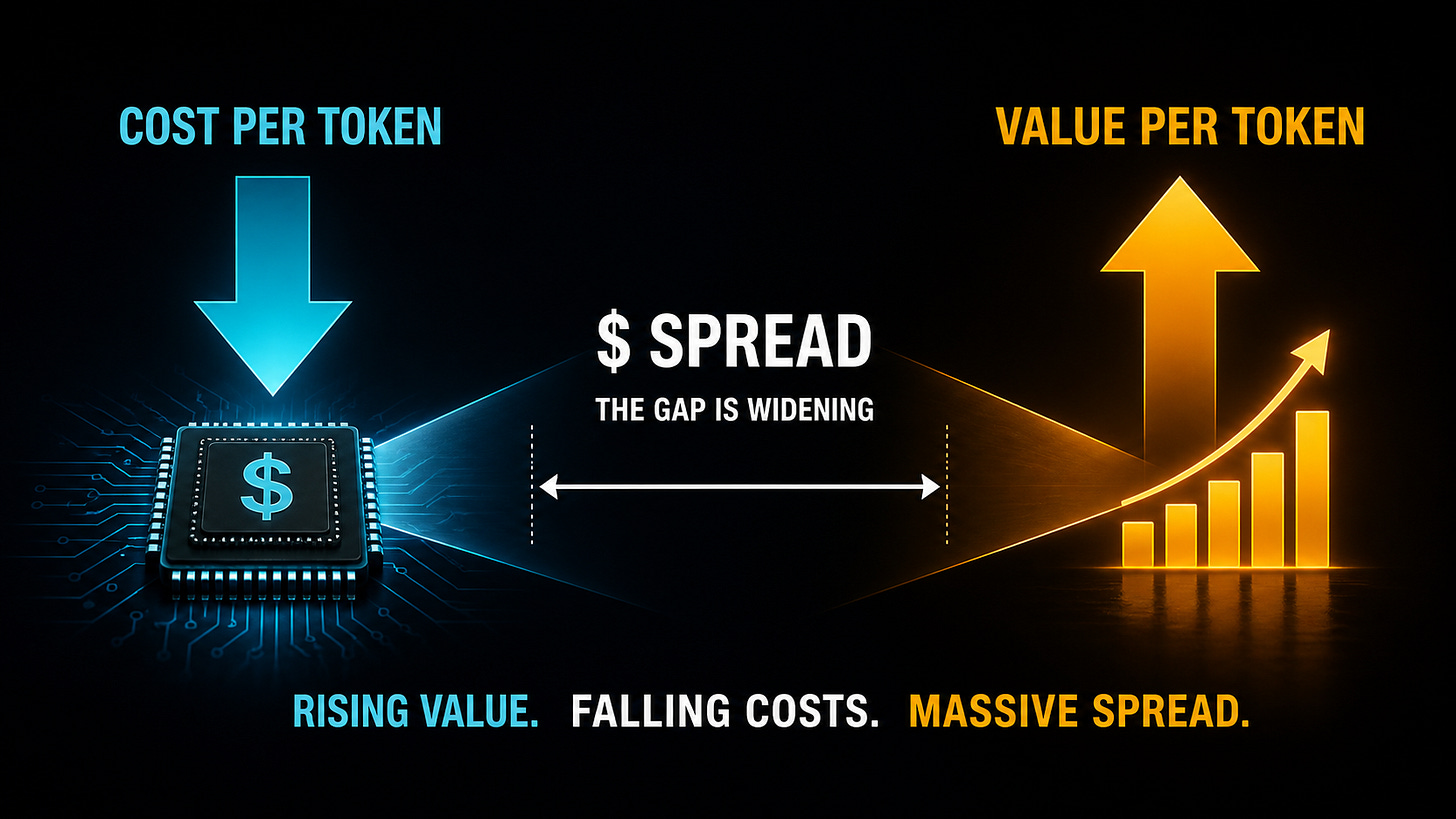

The spread is the story – rising value versus falling cost is where profits live.

Let’s look at eight (most?) of their points one at a time….

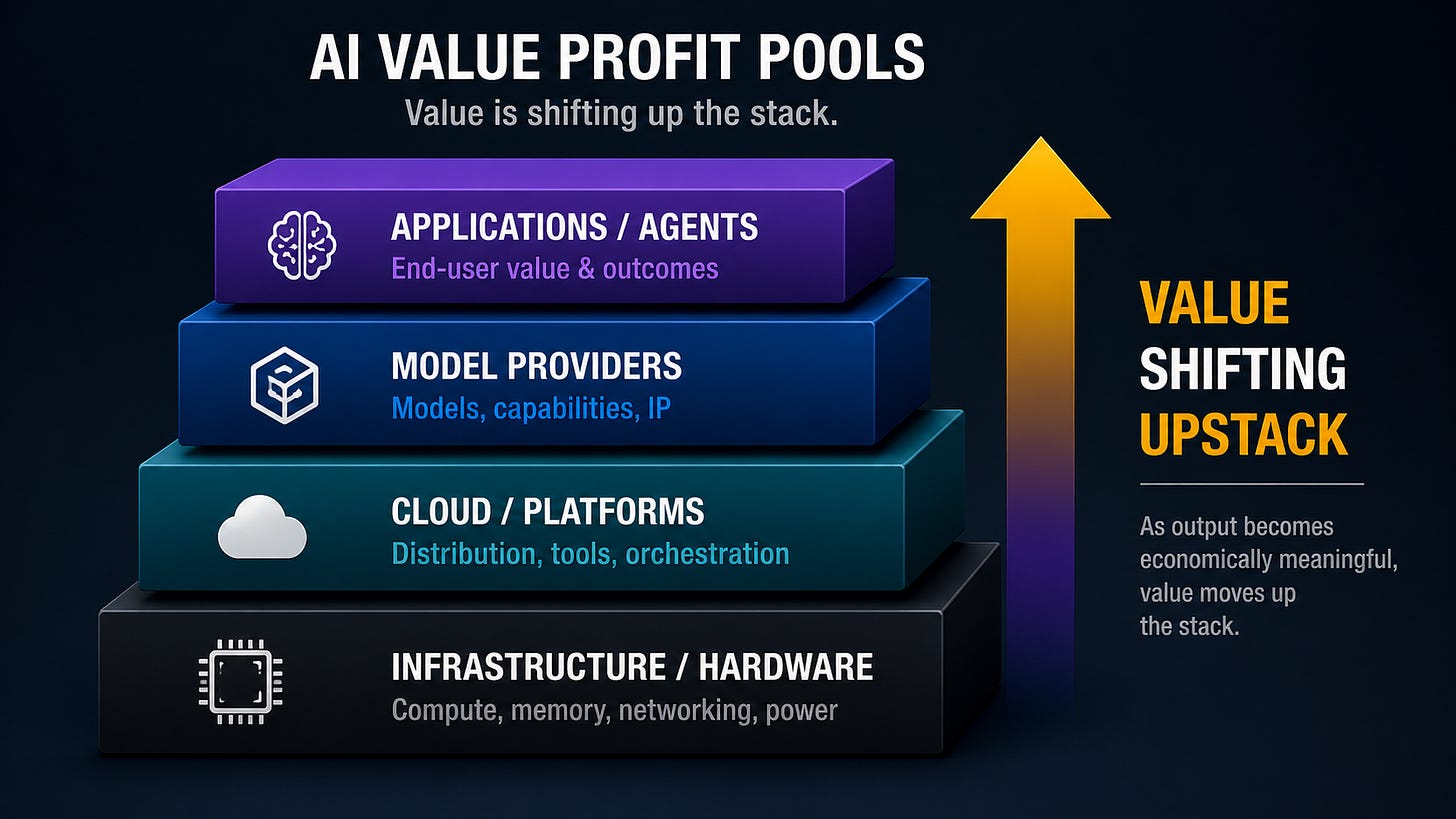

One: “AI Value Profit Pools” – Value follows the bottleneck. Now it’s moving up the stack.

2023 to now has been the infrastructure trade. GPUs, power, memory… if it touches a bottleneck, it works. (Fwiw I remain bullish on this trade; neither the thesis nor my position should be news.) Meanwhile, model labs have struggled to show real utility or strong margins.

What’s changed is that the (model) output is now economically meaningful. Once that happens, value naturally moves up the stack. The stack is a moving system with constraints shifting, capital following those constraints, and value pooling where the bottleneck sits. For more (from me) on that see: here re memory, here and here re CPUs, and here re capital.

At the end of the day, as AI starts doing the work, this is a capital shift from labor to compute.

Before everyone soils their collective pants, this doesn’t mean AI is “taking all the jobs.” It means productivity rises, capital reallocates, and new jobs emerge around the new system. (I unpacked that here; and I also had ChatGPT write a newspaper front page From the Porch Future ← the USAi Tomorrow, natch.)

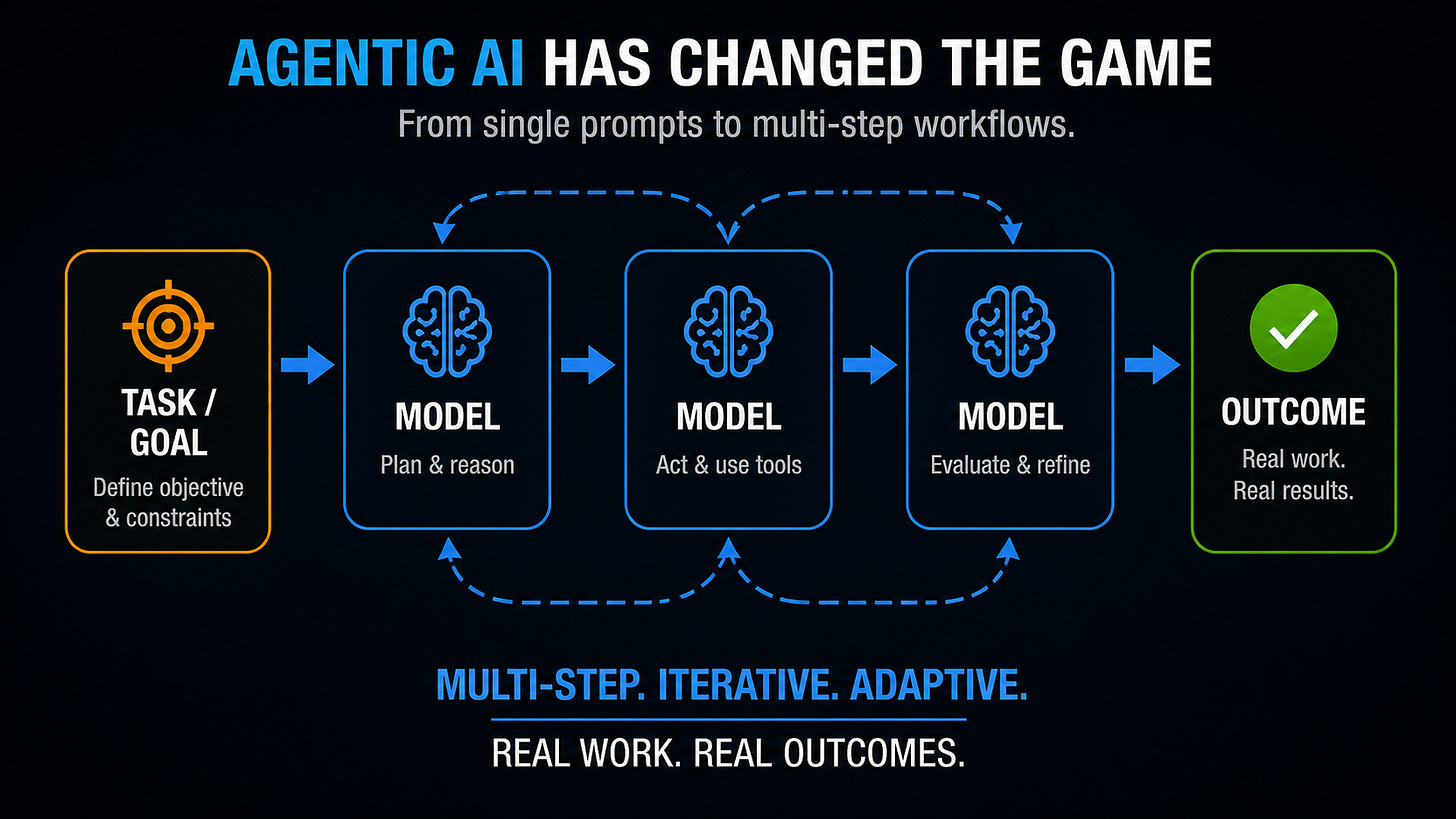

Two: “Agentic AI Has Changed the Game” – Demand is work.

As discussed (woefully ad nauseam) by me (starting here): AI has gone from a (much) better chatbot to a system for doing work: multi-step workflows, models calling models, and tasks collapsing from hours to minutes. Once you view it that way, demand stops being about users or seats and becomes about work and workflows. (SemiAnalysis themselves said they’re running ~$10.95M/year on Claude, about 30% of total comp. That’s not just a chatbot habit.)

Last week in OpenAI Didn’t Overbuy Compute, I tried to frame demand as:

Revenue = price × workflows × (tokens / workflow)

Agentic systems push on all three, especially that last term.
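For anyone who wants to poke at that identity, here's a minimal sketch. It assumes "price" means price per token (otherwise the units don't cancel), and every number in it is hypothetical, picked only to show how the three terms multiply.

```python
# Hypothetical inputs throughout; none of these figures come from SemiAnalysis.
def revenue(price_per_token, workflows, tokens_per_workflow):
    """Revenue = price x workflows x (tokens / workflow), with price taken per token."""
    return price_per_token * workflows * tokens_per_workflow

# "Chatbot" regime: short exchanges, few tokens per task.
chat_era = revenue(price_per_token=10e-6, workflows=1_000_000, tokens_per_workflow=2_000)

# "Agentic" regime: price per token drops 20%, workflows double,
# and each workflow burns 10x the tokens (models calling models).
agentic_era = revenue(price_per_token=8e-6, workflows=2_000_000, tokens_per_workflow=20_000)

multiple = agentic_era / chat_era
print(f"revenue multiple: {multiple:.1f}x")
```

With these made-up inputs, revenue grows about 16x (0.8 × 2 × 10) even though the price per token fell. The last term does most of the lifting.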

Three: “Tokens Are Getting Cheaper to Produce” – The unit got cheaper. The workload got bigger.

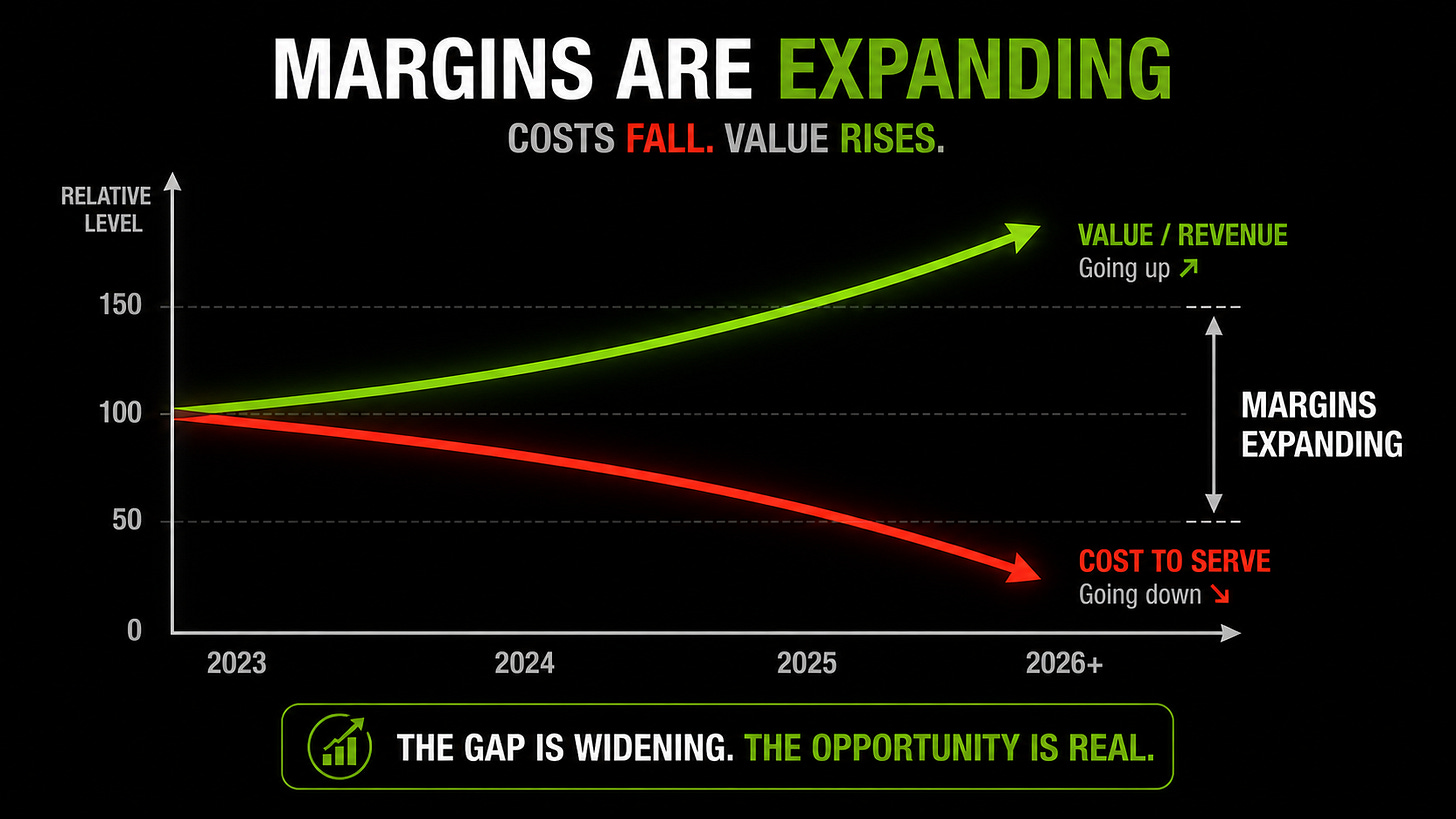

At the same time, the supply side is improving rapidly. Blackwell is now generating ~30x more tokens/sec/GPU than Hopper did a year ago (as per SemiAnalysis and, well, Nvidia). Throughput gains like that are driving down the cost per token at a remarkable pace. Under a traditional lens, that should compress margins. It hasn’t, because the (real) unit that matters is the work the tokens enable. (See here again re these economics.)
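To see why throughput dominates, here's a rough sketch of cost per token as GPU cost per hour divided by tokens per hour. Only the ~30x throughput ratio comes from the text above. The hourly costs and the baseline tokens/sec are placeholders I made up, including the assumption that the newer GPU costs twice as much per hour.

```python
def cost_per_million_tokens(gpu_cost_per_hour, tokens_per_sec):
    """Dollars to generate one million tokens on a single GPU."""
    tokens_per_hour = tokens_per_sec * 3600
    return gpu_cost_per_hour / tokens_per_hour * 1_000_000

# Hypothetical hourly costs and baseline throughput; only the 30x ratio is from the text.
hopper = cost_per_million_tokens(gpu_cost_per_hour=2.0, tokens_per_sec=1_000)
blackwell = cost_per_million_tokens(gpu_cost_per_hour=4.0, tokens_per_sec=30_000)

cost_drop = hopper / blackwell
print(f"cost per token falls {cost_drop:.0f}x")
```

Under those assumptions, a GPU that costs 2x as much per hour but pushes 30x the tokens still cuts the cost per token by roughly 15x.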

Four: “Model Provider Margins Will Continue to Increase” — Cheaper to make, sold for more — and the mix keeps tilting up.

Even as headline pricing comes down, margins are expanding, costs are falling faster than prices, and usage is shifting toward higher-value models. That only makes sense if pricing is moving toward value, not cost. SemiAnalysis noted that Anthropic ARR went from $9B to $44B+ this year, with inference gross margins moving from <40% to 70%+ over the same window.
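The "costs falling faster than prices" mechanic is just gross margin arithmetic. A minimal sketch, with hypothetical per-unit prices and costs (these are not Anthropic's actual numbers; they're chosen only to show how a price cut and a margin expansion can coexist):

```python
def gross_margin(price, cost):
    """Gross margin as a fraction of price."""
    return (price - cost) / price

# Hypothetical: price per unit of output falls 25%, cost to serve it falls 70%.
before = gross_margin(price=1.00, cost=0.60)
after = gross_margin(price=0.75, cost=0.18)

print(f"margin before: {before:.0%}, after: {after:.0%}")
```

In this toy case the headline price drops by a quarter and the margin still goes from 40% to 76%, because cost fell much faster than price.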

Let’s call it “metered intelligence.”

Five: “Why Model Provider Profits Won’t Get Competed Away” — Scarcity breaks the commodity model.

The (a?) bear story is “competition will compress margins.” But frontier performance still matters, and compute is still (wildly) supply constrained. That combo breaks the usual commodity dynamic. See here re CPU scarcity and basically anywhere from (at least) here forward re GPU scarcity (which still exists).

Six: “Agentic AI Hits the Market, but Nvidia and TSMC Haven’t Flinched” — Value moved. Pricing hasn’t (yet).

The irony of all of this downstream value creation (for now) is that upstream pricing hasn’t fully followed (for now). I.e., the system is creating more value than it’s currently charging for. N3 utilization (N3 is TSMC’s leading-edge 3nm process, what every AI chip is built on) is expected to top 100% in 2H26. DRAM fabs are already running over 90%. (See here re the HBM crunch.) Pure scarcity, and sticker prices still haven’t moved.

I started talking about GPU / AI infra economics here. The numbers have changed, but the thesis remains intact.

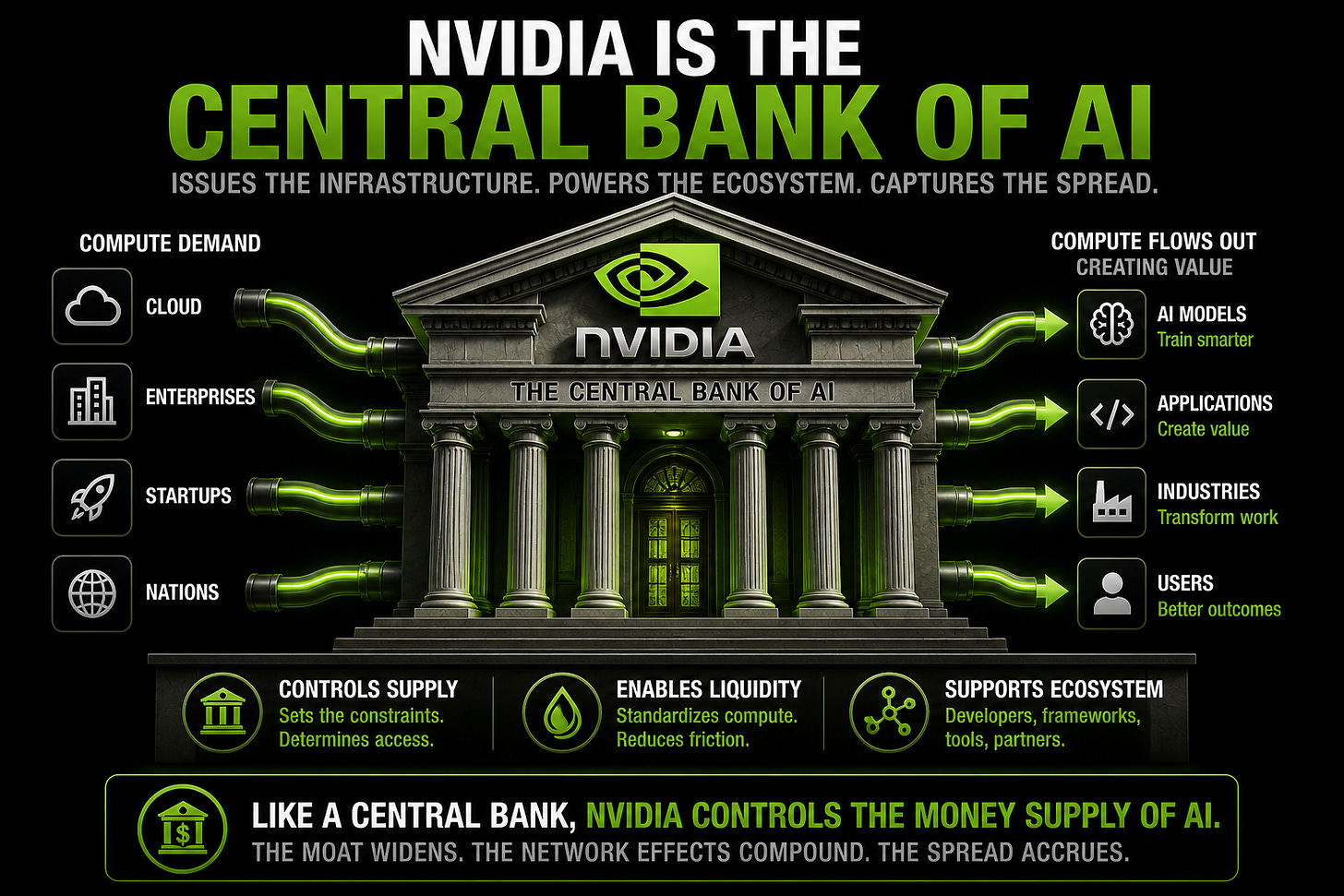

Seven: “Nvidia as the ‘Central Bank of AI’” — From selling chips to financing the system (From the Porch).

Nvidia is managing the entire system right now: controlling supply, enabling liquidity, and supporting the broader ecosystem. It’s not maximizing every dollar today; instead, it’s ensuring the system expands in a way that keeps it at the center. I framed this more mechanically here. TLDR: once compute becomes a financial asset, the system stops selling GPUs and starts financing the work those GPUs produce.

You can already see it in prepaid (seemingly circular?) capacity deals, cluster financing, GPU leasing, and contracts written against future compute output. What looks messy today is just the early plumbing of a new financial layer forming around compute.

(You can also see another USAi Tomorrow future frontpage re the “pending Federal Compute Bank Token Market Intervention” in 2036 here.)

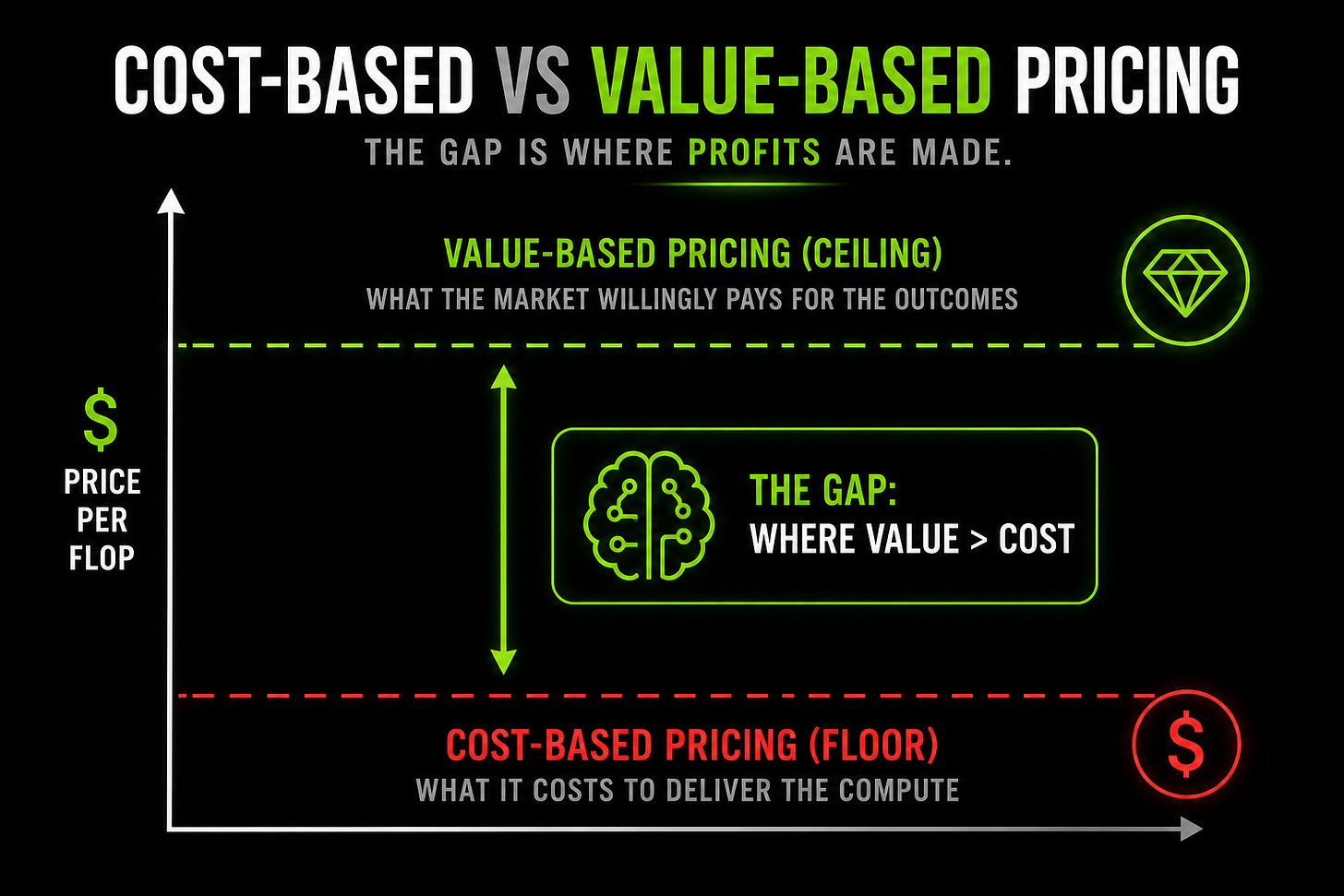

Eight: “Cost-Based vs Value-Based Pricing” – The spread is where the money’s at.

Cost-based pricing sets the floor. Value-based pricing sets the ceiling. And right now, the gap between the two is large. Which implies the next phase is about who captures that spread. (Spoiler alert: the model layer is taking a bigger share – alongside, not instead of, infrastructure.)
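One way to put numbers on the floor/ceiling framing is a sketch like this: the spread is value minus cost, and the seller's share is how far the price sits above the floor. The function name and all three inputs are hypothetical, for illustration only.

```python
def spread_capture(cost, price, value):
    """Return the total spread and the fraction of it the seller captures."""
    spread = value - cost                   # ceiling minus floor
    seller_share = (price - cost) / spread  # 0.0 = priced at cost, 1.0 = priced at value
    return spread, seller_share

# Hypothetical task: costs $1 of compute, sells for $3, replaces $11 of work.
spread, seller_share = spread_capture(cost=1.0, price=3.0, value=11.0)
print(f"spread: ${spread:.2f}, seller captures {seller_share:.0%}")
```

Here the seller keeps 20% of a $10 spread and the buyer keeps the rest. "Who captures the spread" is a question about how that fraction moves over time.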

Follow the capital, From the Porch.

So, yes: demand compounds, costs fall, pricing lags, and value rises. That gap is where it’s at.

AI is being priced like labor.

And capital is already moving to match it.

Disclaimer: I used a shit ton of AI on this. Mostly to fact-check myself against the source — turns out I was mostly right (or at least aligned), which was either validating or terrifying depending on the hour. The thinking is mine. The receipts are SemiAnalysis's. And ChatGPT, Gemini, and Claude helped me make sure I wasn't lying to you (or myself).